Introduction

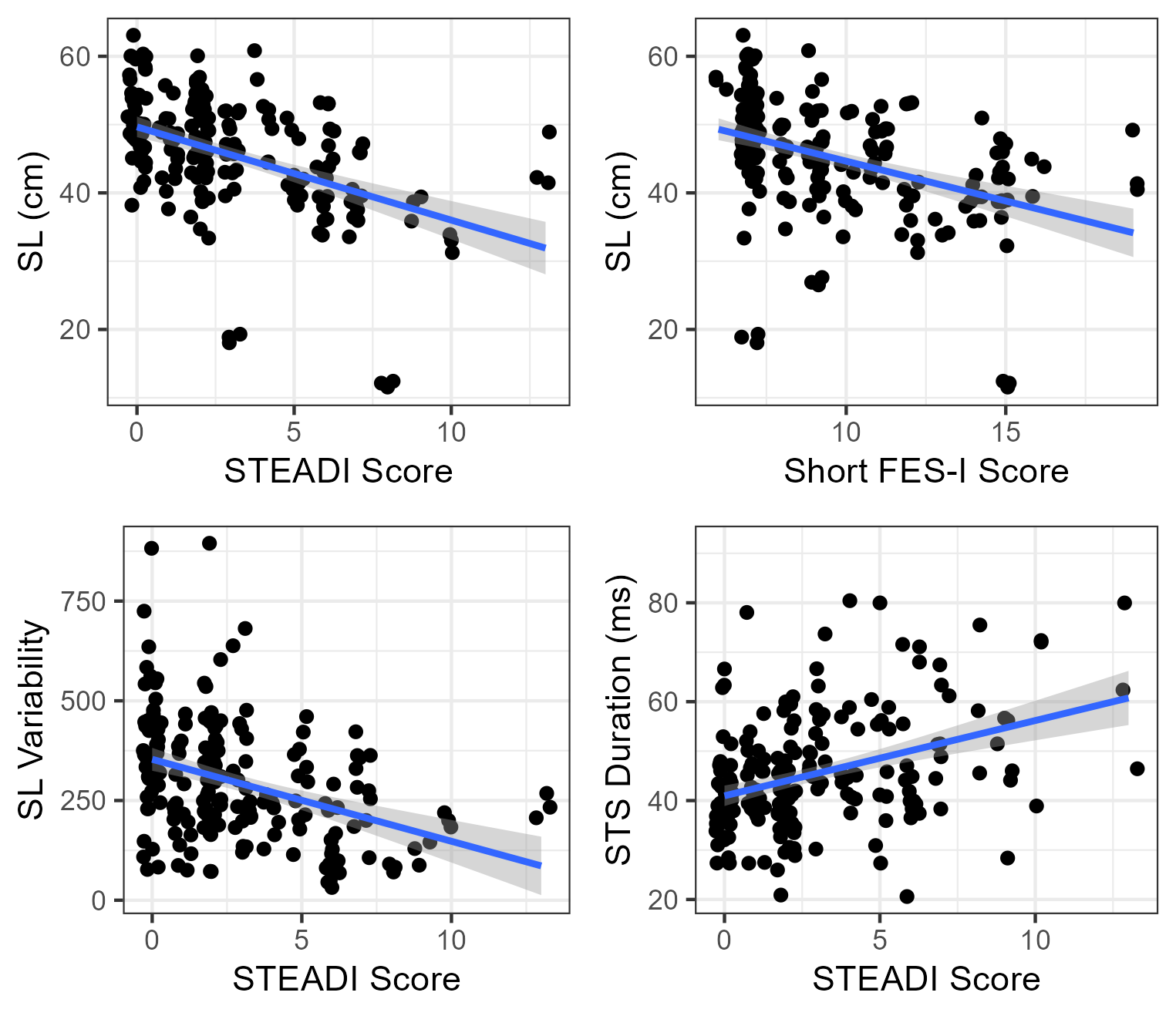

Movement is central to human interaction and well-being across the lifespan. For older adults in particular, movement is an indicator of overall health in the physical, mental, and cognitive domains. Gait evaluation is therefore a key clinical measure for identifying older adults at risk for a range of physiological and pathological aging conditions. It is commonly used in primary care visits and geriatric assessments to screen patients at risk for frailty or falls.

However, clinical gait evaluation remains largely limited to stopwatch-measured gait speed, because comprehensive assessments are constrained by limited access to technology and specialized training. Although the biomechanical correlates of fall risk are well established, existing methods for measuring them, such as wearable inertial sensors, optoelectronic marker-based systems, and multi-camera markerless motion capture, require dedicated infrastructure and considerable technical training. This restricts their deployment beyond controlled clinical or research settings.

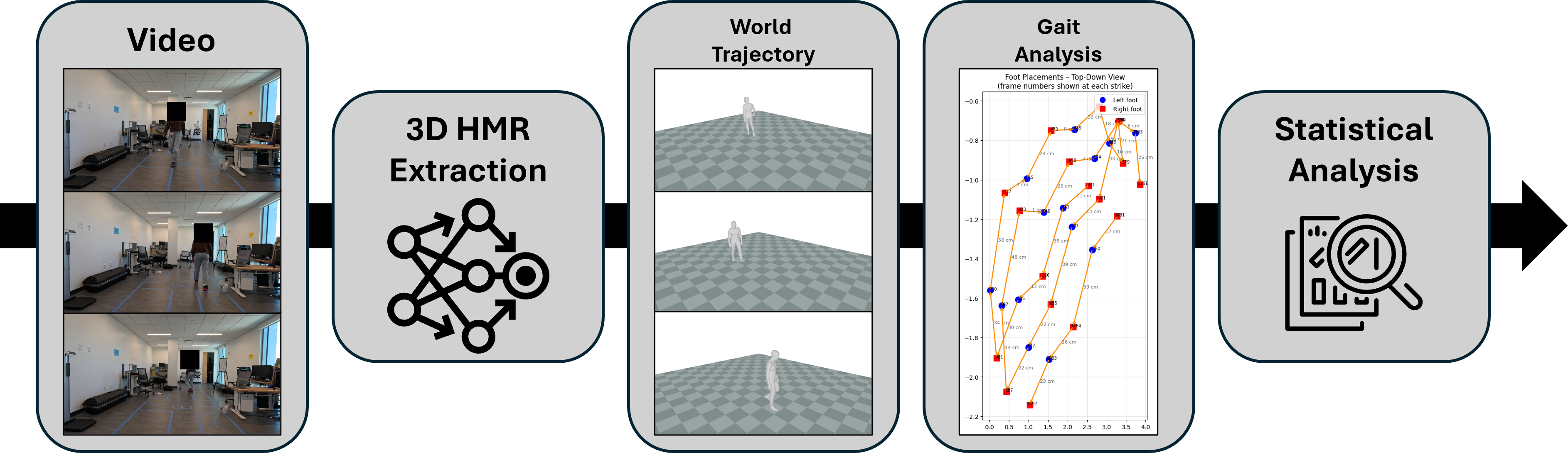

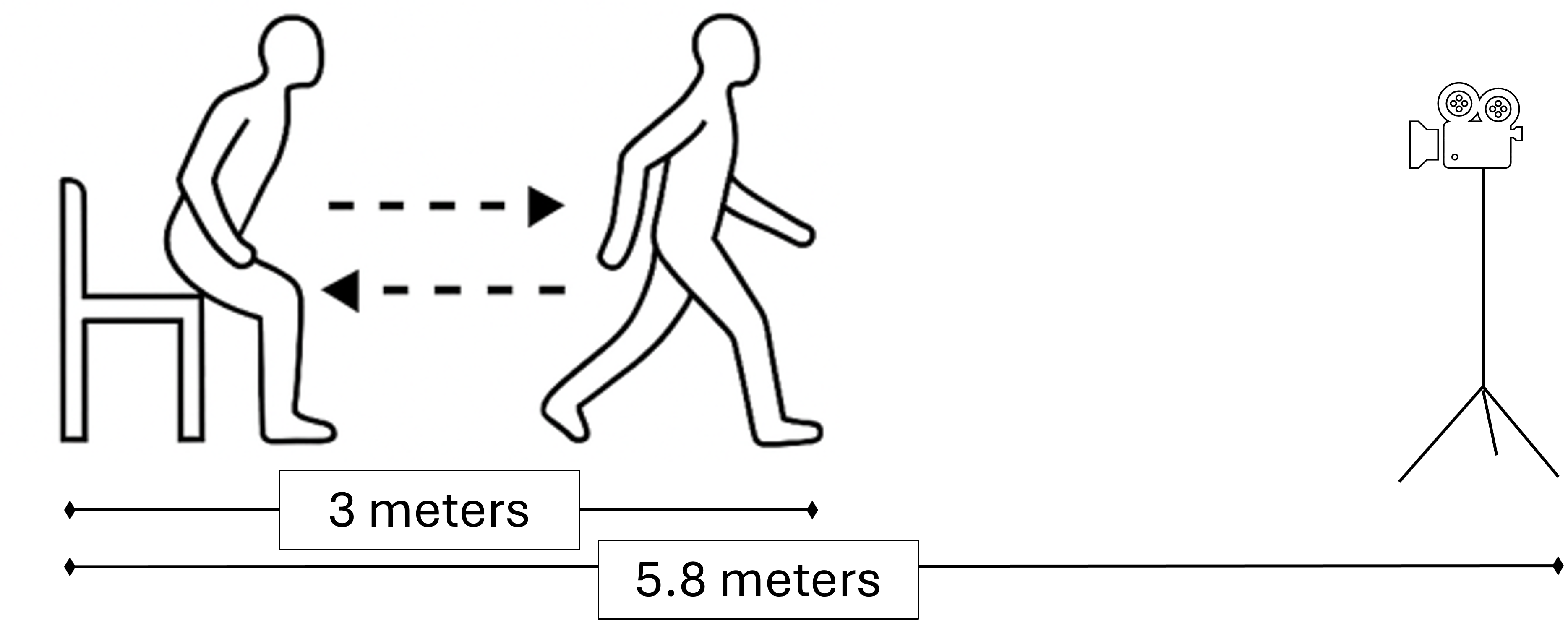

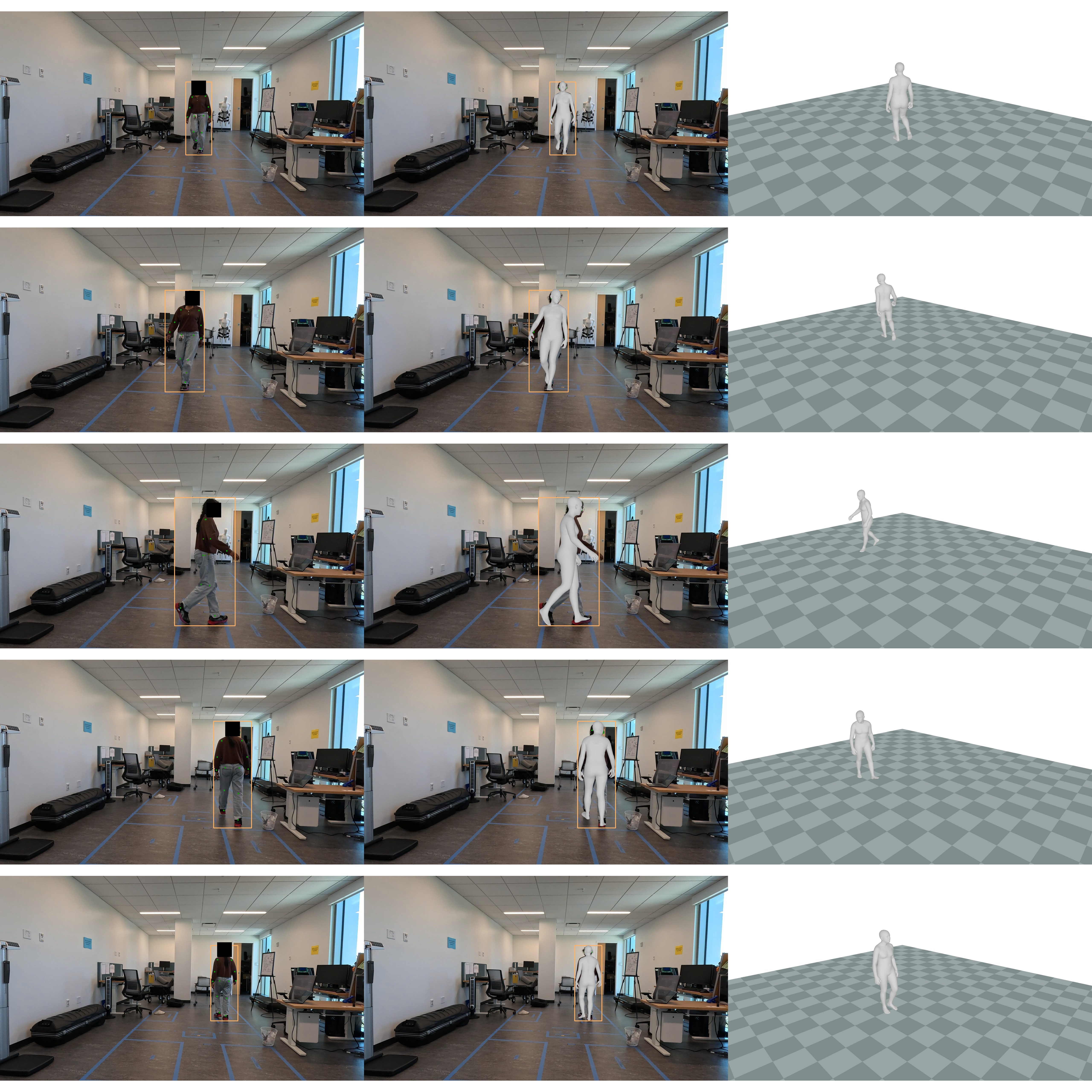

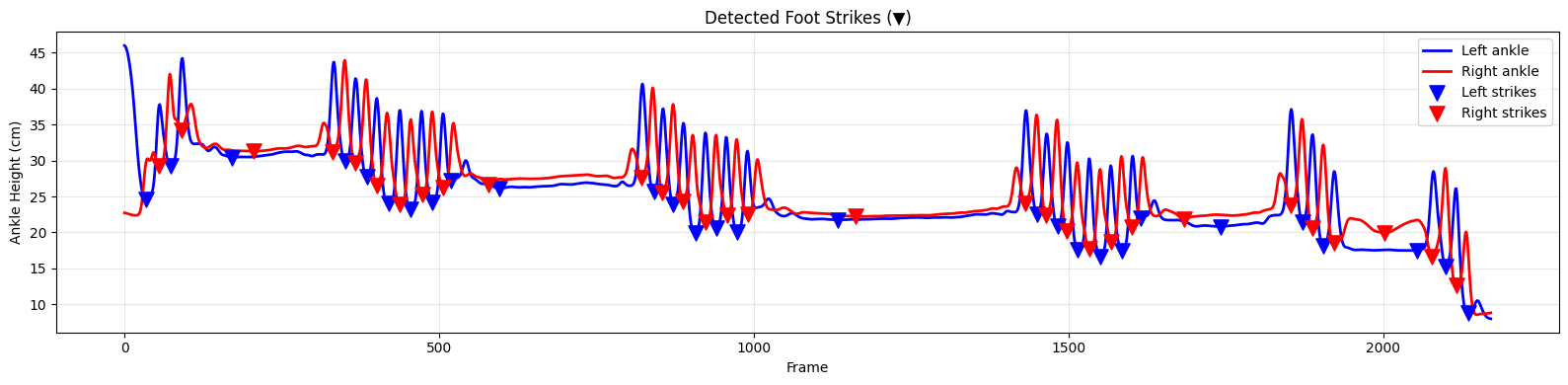

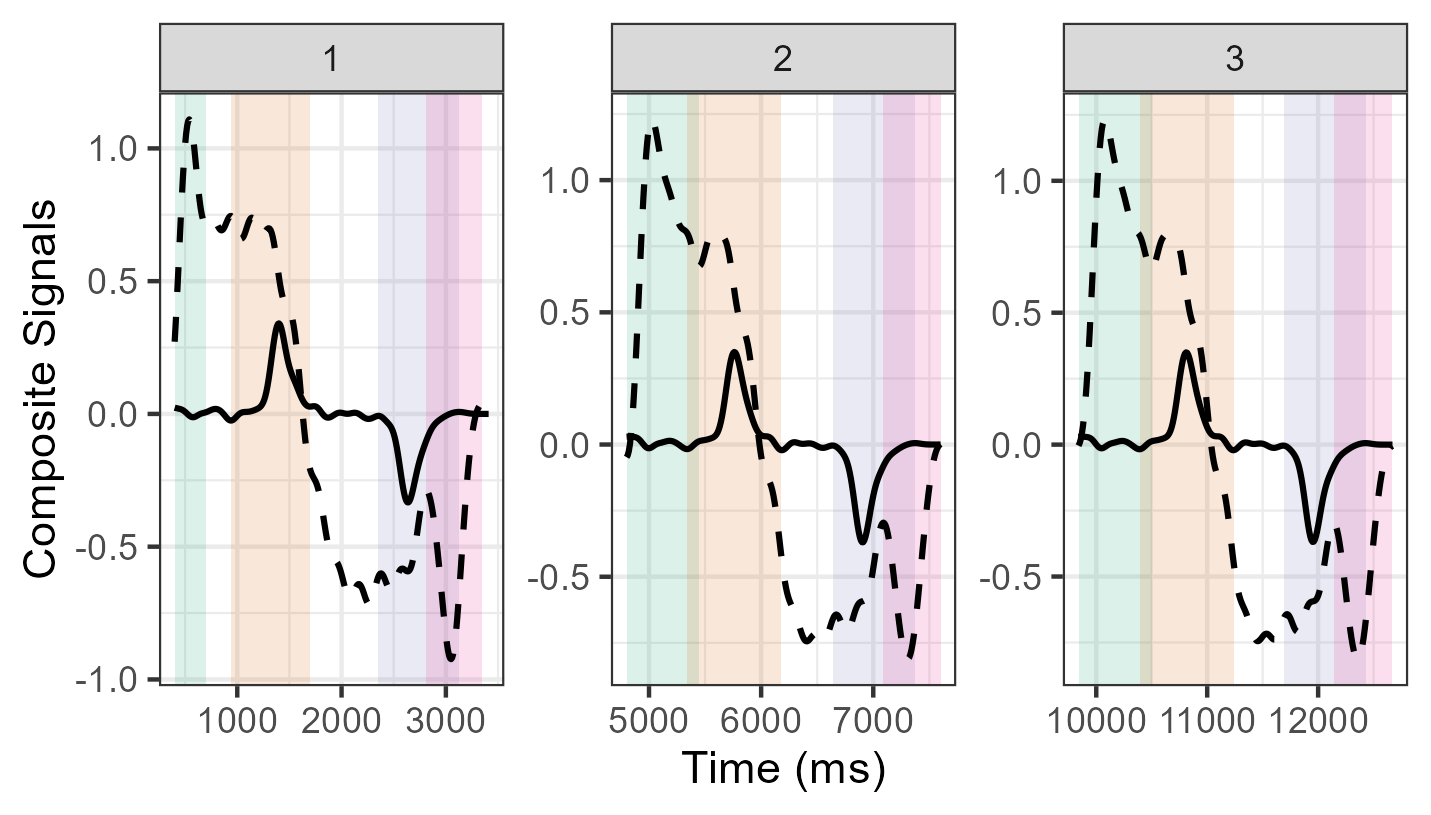

To address this gap, we present a novel computer vision approach that estimates 3D gait measures from a single monocular video of older adults performing the Timed Up and Go (TUG) test, using a Human Mesh Recovery (HMR) method. We build our pipeline on Ground View HMR, which recovers full human body motion anchored in world coordinates.